The Growing Problem of Nudify AI Apps Explained for Parents

In a world where generative AI technology is growing faster than we ever expected, parents have a right to worry about its capabilities and implications for their children. With nudify AI apps becoming more accessible, they pose a real threat to children’s privacy.

In this article, we describe what nudify AI apps are, the dangers that surround them, legal risks parents should know, and what to do if your child is targeted by these apps.

Key Takeaways

- Nudify AI apps use generative AI to create fake nude or sexualized images by digitally removing clothing from ordinary photos, often without the subject’s consent.

- Children commonly encounter these apps through social media, messaging platforms, peer sharing, online ads, and unofficial app downloads.

- AI-generated explicit images can be used for bullying, blackmail, harassment, and exploitation.

- Warning signs that your child is being targeted by or is using nudify apps include distress around photos or social media, secrecy about phone use, and mentions of AI “undress” jokes.

- Parents should respond calmly, document evidence, report the content, and maintain open conversations about AI safety, consent, and online behavior.

Contents:

- What Are AI Nudify Apps?

- Why Nudify AI Apps Are Dangerous

- Legal Risks Parents Should Understand

- How Children and Teens May Encounter Nudify AI

- Warning Signs Parents Should Watch For

- What Parents Should Do If Their Child Is Targeted

- How to Reduce the Risks of Nudify AI

- How Findmykids Helps Parents Protect Children from Harmful AI Exposure

- How to Talk to Kids About Nudify AI

- FAQs

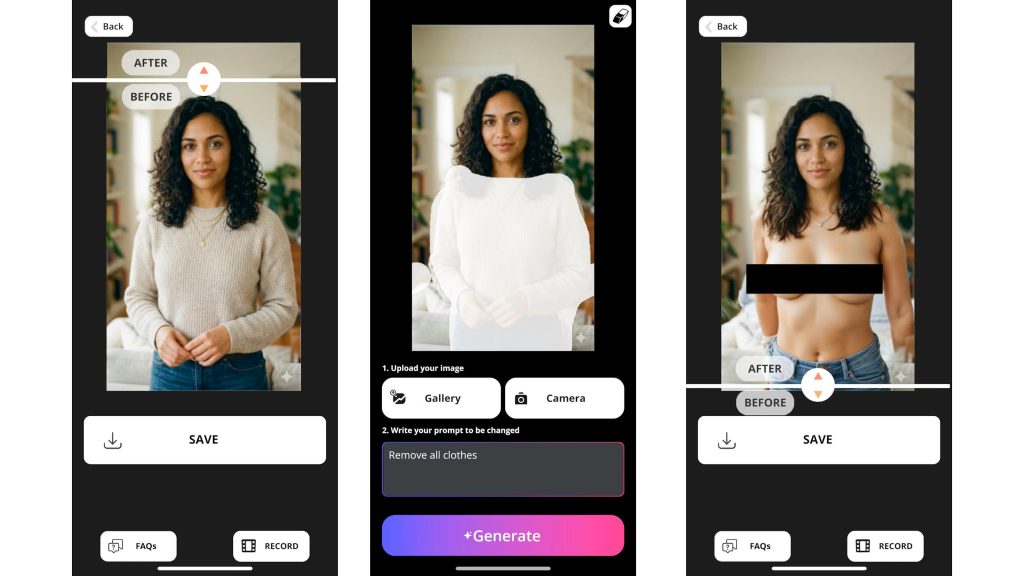

What Are AI Nudify Apps?

Credit: images.prismic.io

Nudify apps are generative AI tools that digitally alter photos and videos so that the person in them appears nude or partially undressed. The results are a type of “deepfake”: AI-generated or AI-manipulated media that alters someone’s appearance, voice, or actions in a realistic way.

A recent example is the use of the AI chatbot Grok to create disturbing, sexualized, or nude images of women that circulated on X (formerly Twitter). Users took photos of women, asked the AI to undress them, and then shared the results in forums, in online communities, and on X itself.

As you can imagine, nudify AI apps create an increasingly dangerous situation. When anyone can feed a photo of another person into this technology to produce a realistic nude image, the potential for harm is serious. These fake images are often mistaken for real ones, which can badly damage a person’s reputation, especially for teens and other minors.

Why Nudify AI Apps Are Dangerous

As nudify apps become more accessible online, they pose growing risks to children, teens, and society as a whole. This trend is expected to continue as AI tools become easier to access and harder to regulate.

Enable Non-Consensual Image Abuse

Nothing in these tools requires the subject’s consent. Someone can take any photo, for example one posted on Instagram or Facebook, and run it through a nudify app. The result is non-consensual image abuse. When the images are of minors, it also becomes a serious legal matter.

Can Be Used for Bullying or Blackmail

Nudify apps and deepfakes can quickly become weapons for bullying and cyberbullying. While some teens may use them as “jokes,” these fake images can lead to isolation, anxiety, depression, and even self-harm in the victims in the photos.

In some cases, the images may be used as blackmail. Because the photos look so realistic, someone may threaten to release them unless the victim hands over money, more photos, or something else. Victims can feel backed into a corner with no other option, because releasing the photos could damage their reputation and their future.

May Become Child Sexual Exploitation Material

Even though these images are artificially generated, they can still contribute to child sexual exploitation. AI-generated sexual images of minors can be treated as child sexual abuse material under certain laws. If these images are created and distributed, there are legal consequences that can follow.

Collect Personal Data

The apps that create these nude images are often not transparent about what data they collect from users. Some AI nudify apps may store images on external servers, use them for AI training, or share them with third parties. This means the images someone uploads or creates are not private. They could be circulating without the creator’s (or the subject’s) knowledge.

Legal Risks Parents Should Understand

While legislation written specifically for artificial intelligence is still limited, there are legal consequences when minors are involved. When an AI-generated image sexually exploits a child (sometimes referred to as AI-CSAM, or artificial intelligence-generated child sexual abuse material), the same laws can apply as for other child sexual abuse material.

Federal laws such as 18 U.S. Code § 2252A and 18 U.S. Code § 2256 prohibit certain forms of child sexual abuse material, including “digital or computer-generated images indistinguishable from an actual minor” and images “created, adapted, or modified” to depict an identifiable minor in sexually explicit conduct. The U.S. Department of Justice states that these laws can apply to some AI-generated or digitally altered explicit images involving minors.

These laws also apply to teens who share sexually exploitative material depicting minors, even if that material is AI-generated. They could be held liable and face child pornography charges for distributing the content. Teens might assume that because an image is fake, it is legal, but this is not the case, and it’s important for them to understand this right away.

Aside from federal and state legal consequences, there can also be school-related consequences. Schools are increasingly updating student conduct policies to cover AI-generated harassment and explicit deepfakes. Students could face suspension, expulsion, or disciplinary investigations, any of which could seriously affect their futures.

How Children and Teens May Encounter Nudify AI

Most teens wouldn’t go to the Apple App Store or Google Play Store in search of nudify apps. Instead, they usually come across them somewhere else first. Common ways children and teens encounter these apps or images include:

- Social media and group chats: Children and teens may encounter nudify AI content through TikTok, Instagram, Snapchat, X, Reddit, or private group chats where users share screenshots, links, or “before-and-after” AI-generated images. Some creators even promote these tools as funny, shocking, or “viral,” which can normalize harmful behavior and encourage use.

- Messaging apps: Discord, Telegram, WhatsApp, and Snapchat are common places where nudify AI tools, bots, or edited images are shared privately.

- Peer sharing: Teens often learn about nudify apps from classmates or friends. A child may be shown the app as a “joke” at school or receive a manipulated image through AirDrop, text, or social media DMs.

- Curiosity-driven searches and ads: Teens searching for AI photo tools, filters, or image-editing apps may unintentionally come across nudify services through online ads, suggested search results, or influencer content.

- App stores and unofficial downloads: While major app stores remove many explicit nudify apps, similar tools may still appear under misleading names or be available through third-party websites. An app may appear harmless while quietly linking users to explicit AI tools or communities with sexual content.

Although companies like Apple and Google restrict nudify apps in their app stores, the Tech Transparency Project (TTP) reports, citing data from app analytics firm AppMagic, that many apps capable of generating explicit images remain available. The report attributes 483 million downloads and $122 million in revenue to these apps.

Warning Signs Parents Should Watch For

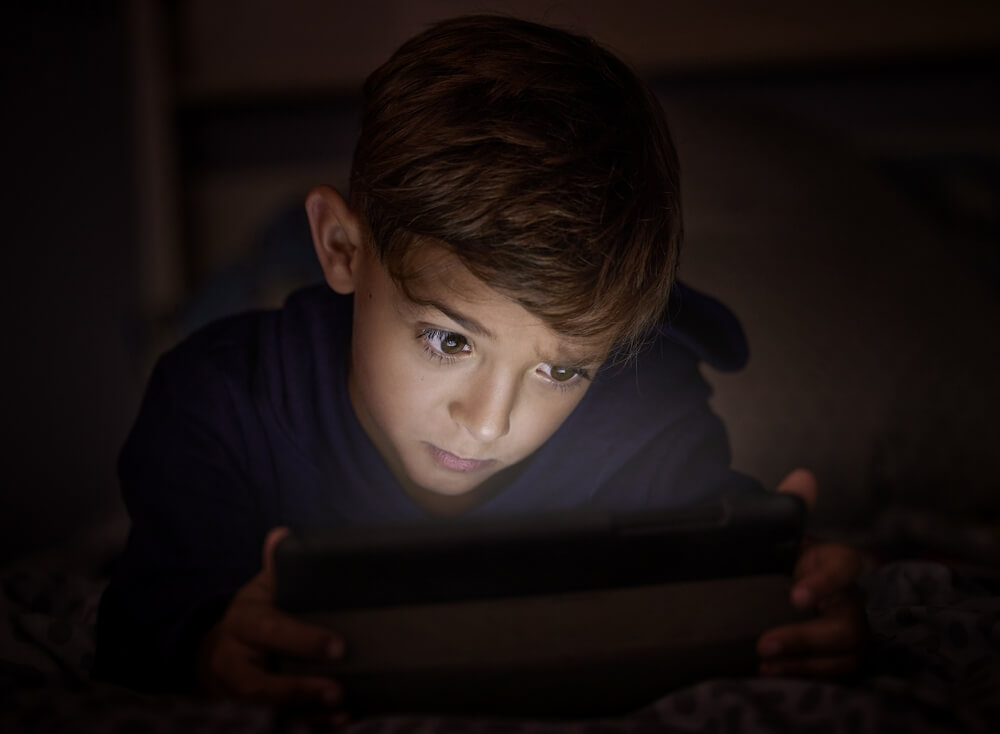

DimaBerlin/Shutterstock.com

The more parents are aware of what their children and teens see and do online, the better they can identify warning signs that they are engaging in harmful actions. Below are some behaviors and signs that may signal your child or teen is using or has been affected by nudify apps.

Sudden Distress Around Photos or Social Media

If your child or teen suddenly becomes anxious about taking photos, having photos of themselves on social media, or stops using social media platforms altogether, it could be a warning sign. They may be dealing with online harassment, such as AI-generated images being made of them, or fear that it could happen.

Mentions of Fake Images, AI Edits, or “Undress” Jokes

Parents should pay attention if their child mentions classmates using AI to “edit” photos, make fake nudes, or “undress” people as a joke. Teens may minimize the behavior, but these comments can signal exposure to harmful deepfake content or even bullying.

New Secrecy Around Phone Use

If you notice that your child or teen has started hiding their phone screen, quickly closing apps, or using locked folders on their phone, it could be a warning sign. In these cases, they may be involved with inappropriate apps, group chats, or explicit AI-generated content.

Conflict in School Chats or Social Groups

When teens pull away from their social circles or are excluded from group chats or gatherings, it could be connected to AI-generated images or cyberbullying. In some cases, it could result from rumor-spreading involving these manipulated photos.


What Parents Should Do If Their Child Is Targeted

If your child is targeted by these sexually explicit deepfakes, there is a careful way to handle the situation. You want to be proactive while also protecting your child from future instances. Below are recommended things to do if your child is targeted:

- Stay calm and supportive: Children who are targeted by AI-generated explicit images often feel ashamed, panicked, or afraid of getting in trouble. Focus on reassurance and emotional support first so the child feels safe about being honest.

- Do not share or forward the images: Even when trying to investigate the situation, avoid reposting or forwarding the content. Instead, save evidence carefully through screenshots, usernames, timestamps, and URLs.

- Document evidence immediately: Take screenshots of conversations, usernames, websites, group chats, and threats before content disappears. This can help schools, platforms, or law enforcement with their investigation.

- Report the content: Major social media and messaging platforms prohibit non-consensual explicit imagery and deepfakes. Report the content and request removal as quickly as possible.

- Contact the school if classmates are involved: If the situation involves peers, schools may need to intervene under bullying, harassment, and student safety policies.

- Consider reporting to law enforcement: If threats, extortion, stalking, or explicit images involving minors are present, contact local law enforcement. In some cases, manipulated images may also be used for fraud, impersonation, or coercion, which can escalate the seriousness of the situation.

- Protect your child’s mental health: Victims of deepfake abuse may experience anxiety, embarrassment, depression, or social withdrawal. Monitor emotional well-being closely and consider counseling if needed.

- Strengthen privacy and account security: Help your child make social media accounts more private, remove publicly accessible photos when appropriate, and update passwords.


How to Reduce the Risks of Nudify AI

While AI is definitely becoming more advanced and prevalent in our lives, it is possible to reduce the risk of your child encountering AI nudify apps and content.

- Review privacy settings: In your child’s social media accounts, make sure privacy settings are at maximum so strangers cannot follow or view images that your child posts.

- Talk about group chats: Start a conversation about how group chats should be used among friends, and make it clear that if someone sends inappropriate content, your child can tell you without being judged.

- Keep communication open: Kids are more likely to ask for help if they know they won’t immediately lose their devices or get punished for reporting something uncomfortable.

- Avoid uploading photos to unknown AI tools: Read the privacy policy and terms of use before uploading a photo to any AI tool, since this can help protect your child’s privacy. If your child needs to use AI tools, limit them to ones you have checked and trust.

- Teach kids not to share manipulated images: Even forwarding AI-generated explicit images “as a joke” can seriously harm another person and may lead to school or legal consequences. Encourage kids to delete the content and tell a trusted adult instead of resharing it.

- Use parental controls: Setting parental controls on your child’s devices, including phone, tablet, and computer, can help reduce the risk of them accessing nudify apps and AI sites where explicit content can be generated.

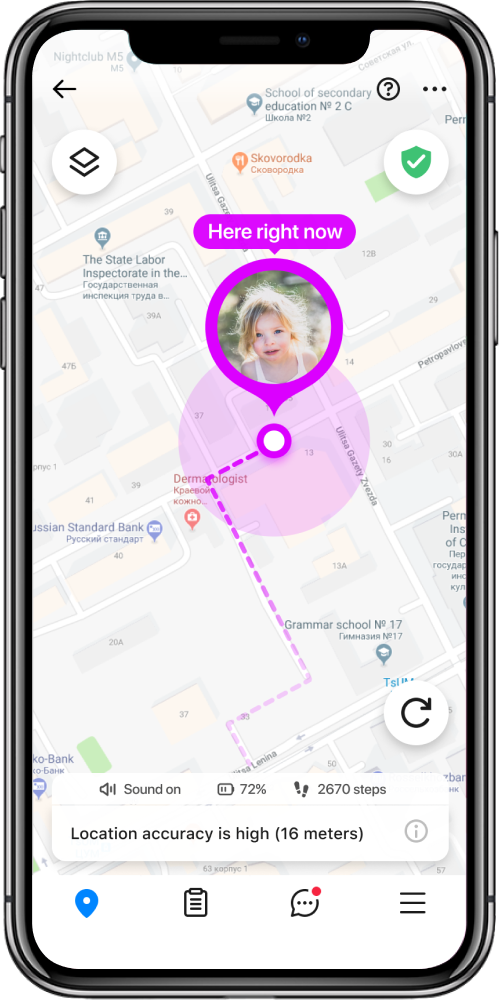

How Findmykids Helps Parents Protect Children from Harmful AI Exposure

As AI-generated content becomes more common online, many parents want better visibility into how their children use their devices and what kinds of apps they interact with. Findmykids gives parents practical tools to help monitor screen habits, limit access to inappropriate content, and start important conversations about online safety.

Millions of parents use Findmykids to:

- View screen time habits: Parents can see how much time their child spends on their phone and which apps they use most often through detailed activity reports.

- Receive phone activity insights: Weekly reports include general information about device usage patterns, including nighttime phone activity and overall app usage trends.

- Block potentially dangerous apps: Parents can block individual apps they consider unsafe and restrict access to age-inappropriate websites and apps.

- Temporarily limit app access: If needed, parents can remotely block distracting or risky apps for a set period of time while still allowing access to essential apps.

While no parental control app can fully prevent harmful content online, tools like Findmykids can help parents stay informed, notice unusual changes in phone habits, and talk with their children earlier about AI-related risks and online safety.

Beyond digital safety tools, Findmykids also helps parents stay connected in everyday offline situations. Parents can check their child’s real-time location, view route history to understand where they’ve been during the day, use Sound Around on Android devices to hear what is happening nearby when safety is a concern, and send a Loud Signal to a child’s phone if they are not responding. Combined with screen time and app monitoring features, these tools can help parents feel more informed and prepared both online and offline.

Try Findmykids today for free to better understand your child’s digital habits, stay connected throughout the day, and help protect them from both online and real-world risks!

How to Talk to Kids About Nudify AI

With the rise of deepfakes and AI-generated content, it’s more important than ever to educate children about fake content, including nudify AI apps. This is where conversations about digital literacy take precedence. Knowing how to recognize fake content, question the source, and research a topic further can keep them from believing fake news and images.

When it comes to talking about nudify AI and deepfakes, it’s important to make your kids aware of how careful they need to be when sending and posting photos of themselves. Be honest about what AI tools can do and how people can use them to harm others.

Overall, it’s important to let your child know that they can come to you with any concern, worry, or question they have about AI and explicit content. If their friends are using AI to “undress” classmates or even celebrities, they should come to you. And the most important thing is that they should be able to come to you without fear or judgment.

Keep Your Kids Safe from Nudify AI Apps

Many experts agree that using generative AI to create deepfakes, especially sexual content or images with clothing removed, should be banned or restricted. However, legislation still has to catch up with the rapid advancement of AI technology.

The first part of keeping your kids safe from nudify AI apps is learning about them and the threat that they pose. The second is taking action with parental control apps like Findmykids and having honest conversations with your children about these tools and their consequences.

Now you have the tools to protect your children and to have informed conversations with them about the dangers and how they can protect themselves. If you found this article helpful, share it with a friend who may not know the dangers of generative AI and deepfakes.

FAQs

What is Nudify AI?

Nudify AI refers to generative AI tools that take a real image of a person and produce a new, fake image in which the person appears nude or partially undressed.

Are Nudify AI apps illegal?

The apps themselves sit in a legal gray area. How they are used, however, can be illegal, such as creating images without consent or using images of minors.

Can fake AI images still hurt someone?

Yes, fake AI images, especially those of an explicit or sexual nature, can harm someone’s reputation and social standing.

What should I do if my child is targeted?

If your child is targeted, gather as much evidence as possible and report the incident to the platform where it was posted, to the school if peers were involved, and to law enforcement. Remember not to share or distribute the image under any circumstances.

Sources & References

- Elon Musk’s Grok AI Floods X with Sexualized Photos of Women and Minors, Reuters, January 4, 2026

- Sexting: Risky Actions and Overreactions, LEB, July 2, 2010

- Challenges in the World of AI and Deepfakes: A Guide for Parents, Nationwide Children’s, February 25, 2025

- Navigating the Impacts of Generative AI and Image-Based Abuse on Children, Alannah & Madeline Foundation, March 26, 2024

- Apple and Google Are Steering Users to Nudify Apps, Tech Transparency Project, April 15, 2026

Cover image: generated by ChatGPT / OpenAI
